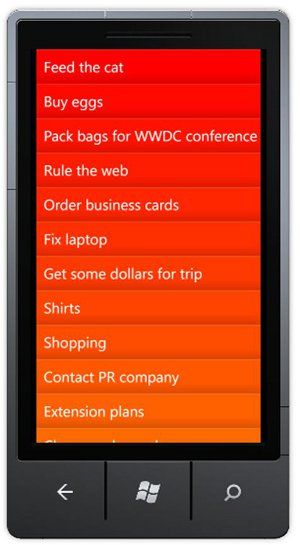

This blog post describes the implementation of a gesture-based todo-list application. The simple interface is controlled entirely by drag, flick and swipe:

So far the application supports deletion and completion of tasks, but not the addition of new ones. I'll get to this in a later blog post!

Introduction - gestures, why don't we use them more?

I think it is fair to say that most Windows Phone applications (and mobile applications in general) have user-interfaces that are a close reflection of how we interact with a desktop computer. Mobile applications have the same buttons, checkboxes and input controls as their desktop equivalent, with the user interacting with the majority of these controls via simple clicks / taps.

The mobile multi-touch interface allows for much more control and expression than a simple mouse pointer device. Standard gestures have been developed, such as pinch/stretch, flick, pan and tap-and-hold; however, these are quite rarely used, one notable exception being pinch/stretch, which is the standard mechanism for manipulating images.

When an application comes along that makes great use of gestures, it really stands out from the crowd. One such application is the iPhone 'Clear' application, a simple todo-list with not one button or checkbox in sight. You can see the app in action below:

Interestingly, its use of pinch to navigate the three levels of menu is similar to the Windows 8 concept of 'semantic zoom'.

When I first saw Clear, the clean, clutter-free interface immediately spoke 'Metro' to me! This blog post looks at how to recreate some of the features of Clear using Silverlight for Windows Phone, in order to create a gesture-driven todo application.

NOTE: All of the work on my blog is under a Creative Commons Share-alike licence. For this blog post I just want to add that I do not want someone to take this code in order to release a 'Clear' clone on the Windows Phone marketplace. This blog post is for fun and education, to make people think more about the possibilities of gestures.

Rendering the to-do items

I haven't followed the MVVM pattern for this simple application (I don't need to unit test or collaborate with a designer!), but I have followed the standard approach of using databinding. Each item is represented by an instance of the ToDoItem class, which has Text, Completed and Color properties and implements INotifyPropertyChanged. A collection of these items is bound to an ItemsControl in order to render the list:

<ItemsControl ItemsSource="{Binding}" x:Name="todoList">

<ItemsControl.ItemTemplate>

<DataTemplate>

<Border Background="{Binding Path=Color, Converter={StaticResource ColorToBrushConverter}}">

<Grid>

<Grid.Background>

<!-- create a subtle gradient that overlays the background -->

<LinearGradientBrush EndPoint="0,1" StartPoint="0,0">

<GradientStop Color="#22FFFFFF"/>

<GradientStop Color="#00000000" Offset="0.1"/>

<GradientStop Color="#00000000" Offset="0.7"/>

<GradientStop Color="#22000000" Offset="1"/>

</LinearGradientBrush>

</Grid.Background>

<!-- task text -->

<TextBlock Text="{Binding Text}" Margin="15,15,0,15" FontSize="30"/>

</Grid>

</Border>

</DataTemplate>

</ItemsControl.ItemTemplate>

<ItemsControl.Template>

<ControlTemplate TargetType="ItemsControl">

<ScrollViewer>

<ItemsPresenter/>

</ScrollViewer>

</ControlTemplate>

</ItemsControl.Template>

</ItemsControl>

The ItemsControl is templated to host its items within a ScrollViewer; the default panel, a vertically-oriented StackPanel, is used here. The template that renders each item is a simple Border, Grid and TextBlock. The code-behind iterates over the ToDoItem instances, setting their Color property to produce a pretty-looking gradient from red to orange, representing the priority of each item:
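The colouring code itself is not listed in this post, so the following is only a minimal sketch of how such a gradient could be computed, assuming simple linear interpolation between two end colours. The `Rgb` struct and the `PriorityColors.Gradient` method are hypothetical names introduced for illustration (`Rgb` stands in for the platform `Color` type so that the sketch runs outside Silverlight):

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for System.Windows.Media.Color, so the sketch has no UI dependencies.
public struct Rgb
{
    public byte R, G, B;

    public Rgb(byte r, byte g, byte b) { R = r; G = g; B = b; }

    public override string ToString()
    {
        return string.Format("#{0:X2}{1:X2}{2:X2}", R, G, B);
    }
}

public static class PriorityColors
{
    // Returns 'count' colours, linearly interpolated from 'first' (top of the list)
    // to 'last' (bottom of the list).
    public static List<Rgb> Gradient(int count, Rgb first, Rgb last)
    {
        var colors = new List<Rgb>(count);
        for (int i = 0; i < count; i++)
        {
            // t runs from 0 for the first item to 1 for the last; a single item gets 'first'
            double t = count > 1 ? (double)i / (count - 1) : 0.0;
            colors.Add(new Rgb(Lerp(first.R, last.R, t),
                               Lerp(first.G, last.G, t),
                               Lerp(first.B, last.B, t)));
        }
        return colors;
    }

    private static byte Lerp(byte from, byte to, double t)
    {
        return (byte)Math.Round(from + (to - from) * t);
    }
}
```

Calling `PriorityColors.Gradient(items.Count, new Rgb(255, 0, 0), new Rgb(255, 165, 0))` would give one colour per item, which could then be assigned to each item's Color property in list order. The exact end colours are an assumption.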

Handling gestures

Silverlight for Windows Phone provides manipulation events which you can handle in order to track when a user places one or more fingers on the screen and moves them around. Turning low-level manipulation events into high-level gestures is actually quite tricky. Touch devices give a much greater control when dragging objects, or flicking them, but have a much lower accuracy for the more commonplace task of trying to hit a specific spot on the screen. For this reason, gestures have a built in tolerance. As an example, a drag manipulation gesture is not initiated if the user's finger moves by a single pixel.
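To make the idea of a tolerance concrete, here is a rough sketch of how a drag might only be recognised once the finger has travelled some minimum distance. This is not the toolkit's implementation; the `DragDetector` class and the tolerance value passed to it are assumptions made purely for illustration:

```csharp
using System;

// Hypothetical helper: reports a drag only after the touch point has moved
// further than a given tolerance from where the finger first landed.
public class DragDetector
{
    private readonly double _tolerance; // in pixels; the value is chosen by the caller
    private double _startX, _startY;
    private bool _dragging;

    public DragDetector(double tolerance)
    {
        _tolerance = tolerance;
    }

    public bool IsDragging { get { return _dragging; } }

    // Call when the finger first touches the screen.
    public void TouchDown(double x, double y)
    {
        _startX = x;
        _startY = y;
        _dragging = false;
    }

    // Call for every movement. Returns true once the finger has moved beyond the
    // tolerance; after that the drag stays active until the next TouchDown.
    public bool TouchMove(double x, double y)
    {
        if (!_dragging)
        {
            double dx = x - _startX;
            double dy = y - _startY;
            _dragging = Math.Sqrt(dx * dx + dy * dy) > _tolerance;
        }
        return _dragging;
    }
}
```

With a tolerance of, say, 12 pixels, a one-pixel wobble while tapping is ignored, whereas a deliberate 20-pixel movement starts the drag.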

Fortunately, the Silverlight Toolkit contains a GestureListener which handles manipulation events and turns them into the standard gesture events for you. Unless you need a quite fancy gesture (two-finger-swipe for example), the GestureListener probably gives you all you need. To use this class, simply attach it to the element that you want to handle gestures on, then add handlers to the events that the GestureListener provides.

We'll add a GestureListener to the template that is used to render each ToDoItem:

<ItemsControl ItemsSource="{Binding}" x:Name="todoList">

<ItemsControl.ItemTemplate>

<DataTemplate>

<Border Background="{Binding Path=Color, Converter={StaticResource ColorToBrushConverter}}">

<!-- handle gestures for the ToDoItem element -->

<toolkit:GestureService.GestureListener>

<toolkit:GestureListener

DragStarted="GestureListener_DragStarted"

DragDelta="GestureListener_DragDelta"

DragCompleted="GestureListener_DragCompleted"/>

</toolkit:GestureService.GestureListener>

<Grid>

...

<!-- task text -->

<TextBlock Text="{Binding Text}" Margin="15,15,0,15" FontSize="30"/>

</Grid>

</Border>

</DataTemplate>

</ItemsControl.ItemTemplate>

...

</ItemsControl>

The events produced by the GestureListener are used to indicate that a user has dragged their finger across the screen, but they do not actually move the element that the listener is associated with. We need to handle these events and move the element ourselves:

private void GestureListener_DragStarted(object sender, DragStartedGestureEventArgs e)

{

// initialize the drag

FrameworkElement fe = sender as FrameworkElement;

fe.SetHorizontalOffset(0);

}

private void GestureListener_DragDelta(object sender, DragDeltaGestureEventArgs e)

{

// handle the drag to offset the element

FrameworkElement fe = sender as FrameworkElement;

double offset = fe.GetHorizontalOffset().Value + e.HorizontalChange;

fe.SetHorizontalOffset(offset);

}

private void GestureListener_DragCompleted(object sender, DragCompletedGestureEventArgs e)

{

// the drag has finished, so animate the element back to where it started

FrameworkElement fe = sender as FrameworkElement;

ToDoItemBounceBack(fe);

}

But what are these mysterious methods, SetHorizontalOffset and GetHorizontalOffset, in the above code? They are not found on FrameworkElement. The task of offsetting an element is reasonably straightforward via a TranslateTransform. However, once you set the RenderTransform of an element, it is converted to a MatrixTransform, so you cannot easily retrieve the value of the offset that has just been applied.

In order to hide this slightly messy implementation, I have created a couple of extension methods that use FrameworkElement.Tag to store the current offset:

public static void SetHorizontalOffset(this FrameworkElement fe, double offset)

{

var trans = new TranslateTransform()

{

X = offset

};

fe.RenderTransform = trans;

fe.Tag = new Offset()

{

Value = offset,

Transform = trans

};

}

public static Offset GetHorizontalOffset(this FrameworkElement fe)

{

return fe.Tag == null ? new Offset() : (Offset)fe.Tag;

}

public struct Offset

{

public double Value { get; set; }

public TranslateTransform Transform { get; set; }

}

Yes, I know, Tag is never an elegant solution, but at least the above code is hidden behind extension methods, so feel free to replace it with a more elegant implementation using attached properties if you wish!

When the drag stops we want the item to bounce back into place:

private void ToDoItemBounceBack(FrameworkElement fe)

{

var trans = fe.GetHorizontalOffset().Transform;

trans.Animate(trans.X, 0, TranslateTransform.XProperty, 300, 0, new BounceEase()

{

Bounciness = 5,

Bounces = 2

});

}

Animate is another extension method, which I created in order to quickly create DoubleAnimations for the properties of an element:

public static void Animate(this DependencyObject target, double from, double to,

object propertyPath, int duration, int startTime,

IEasingFunction easing = null, Action completed = null)

{

if (easing == null)

{

easing = new SineEase();

}

var db = new DoubleAnimation();

db.To = to;

db.From = from;

db.EasingFunction = easing;

db.Duration = TimeSpan.FromMilliseconds(duration);

Storyboard.SetTarget(db, target);

Storyboard.SetTargetProperty(db, new PropertyPath(propertyPath));

var sb = new Storyboard();

sb.BeginTime = TimeSpan.FromMilliseconds(startTime);

if (completed != null)

{

sb.Completed += (s, e) => completed();

}

sb.Children.Add(db);

sb.Begin();

}

With the above code in place, we can drag items to one side or the other, then release and watch them bounce back into place.

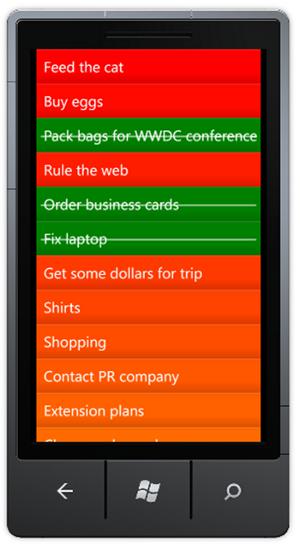

Mark as complete

When an item is dragged sufficiently far to the right, we'd like to have it marked as complete. To implement this, we'll check how far an item was dragged when the user releases their finger. If it was dragged more than half way, we'll mark the item as complete:

private void GestureListener_DragCompleted(object sender, DragCompletedGestureEventArgs e)

{

FrameworkElement fe = sender as FrameworkElement;

if (e.HorizontalChange > fe.ActualWidth / 2)

{

ToDoItemCompletedAction(fe);

}

else

{

ToDoItemBounceBack(fe);

}

}

private void ToDoItemCompletedAction(FrameworkElement fe)

{

// set the ToDoItem to complete

ToDoItem completedItem = fe.DataContext as ToDoItem;

completedItem.Completed = true;

completedItem.Color = Colors.Green;

// bounce back into place

ToDoItemBounceBack(fe);

}

The bindings take care of updating the UI so that our item is now green. I also added a Line element which has its Visibility bound to the Completed property of the ToDoItem, so that completed items are shown with a strike-through.
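The half-way check can also be pulled out into a small pure function, which makes it easy to see how the same test mirrors for a drag in the opposite direction (used for deletion later in this post). This is just a sketch; the `DragOutcome` enum and `DragDecision.Classify` method are hypothetical names that do not appear in the application's code:

```csharp
using System;

// Hypothetical names, introduced for illustration only.
public enum DragOutcome { BounceBack, Complete, Delete }

public static class DragDecision
{
    // horizontalChange: total horizontal distance dragged (positive = right)
    // itemWidth: the rendered width of the dragged item
    public static DragOutcome Classify(double horizontalChange, double itemWidth)
    {
        double threshold = itemWidth / 2;

        if (horizontalChange > threshold)
            return DragOutcome.Complete;   // dragged more than half way to the right

        if (horizontalChange < -threshold)
            return DragOutcome.Delete;     // dragged more than half way to the left

        return DragOutcome.BounceBack;     // not far enough in either direction
    }
}
```

For a 480-pixel-wide item, a 300-pixel drag to the right is classified as Complete, the same drag to the left as Delete, and anything up to and including 240 pixels bounces back.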

Deleting an item

If instead the user slides the item to the left, we'd like to delete it. The DragCompleted event handler can easily be extended to check whether the item was dragged more than half-way across the screen in the other direction. The method that performs the deletion is shown below:

private void ToDoItemDeletedAction(FrameworkElement deletedElement)

{

var trans = deletedElement.GetHorizontalOffset().Transform;

trans.Animate(trans.X, -(deletedElement.ActualWidth + 50),

TranslateTransform.XProperty, 300, 0, new SineEase()

{

EasingMode = EasingMode.EaseOut

},

() =>

{

// find the model object that was deleted

ToDoItem deletedItem = deletedElement.DataContext as ToDoItem;

// determine how much we have to 'shuffle' up by

double elementOffset = -deletedElement.ActualHeight;

// find the items in view, and the location of the deleted item in this list

var itemsInView = todoList.GetItemsInView().ToList();

var lastItem = itemsInView.Last();

int startTime = 0;

int deletedItemIndex = itemsInView.Select(i => i.DataContext)

.ToList().IndexOf(deletedItem);

// iterate over each item

foreach (FrameworkElement element in itemsInView.Skip(deletedItemIndex))

{

// for the last item, create an action that deletes the model object

// and re-renders the list

Action action = null;

if (element == lastItem)

{

action = () =>

{

// clone the list

var items = _todoItems.ToList();

items.Remove(deletedItem);

// re-populate our ObservableCollection

_todoItems.Clear();

_todoItems.AddRange(items);

UpdateToDoColors();

};

}

// shuffle this item up

TranslateTransform elementTrans = new TranslateTransform();

element.RenderTransform = elementTrans;

elementTrans.Animate(0, elementOffset, TranslateTransform.YProperty, 200, startTime, null, action);

startTime += 10;

}

});

}

There's actually rather a lot going on in that method. Firstly, the deleted item is animated so that it flies off the screen to the left. Once this animation is complete, we'd like to make the items below 'shuffle' up to fill the space. In order to do this, we measure the height of the deleted item, then iterate over all the items within the current view that are below the deleted item, and apply an animation to each one. The code makes use of the GetItemsInView extension method that I wrote for the WP7 JumpList control; it returns a list of items that are currently visible to the user, taking vertical scroll into consideration.

Once all the elements have shuffled up, our UI now contains a number of ToDoItems that have been 'artificially' offset. Rather than try to keep track of how each item is offset, at this point we force the ItemsControl to re-render the entire list.
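The timing of the shuffle is worth a quick look, because it explains why the re-render is attached to the last item in view: every animation has the same duration, so the one that starts last also finishes last. The following sketch mirrors the loop in the deletion method using plain integers; `ShuffleTiming` and its method names are hypothetical and only there to illustrate the arithmetic:

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper that reproduces the staggered start times of the shuffle.
public static class ShuffleTiming
{
    // Start time (in ms) for each visible item from the deleted item's index downwards,
    // matching the Skip(deletedItemIndex) loop, which adds a fixed stagger per item.
    public static List<int> StartTimes(int itemsInView, int deletedIndex, int staggerMs)
    {
        var times = new List<int>();
        int startTime = 0;
        for (int i = deletedIndex; i < itemsInView; i++)
        {
            times.Add(startTime);
            startTime += staggerMs;
        }
        return times;
    }

    // The moment (in ms) at which the last animation completes.
    public static int TotalDuration(List<int> startTimes, int durationMs)
    {
        return startTimes.Count == 0
            ? 0
            : startTimes[startTimes.Count - 1] + durationMs;
    }
}
```

With eight items in view and the fourth one deleted (index 3), the start times are 0, 10, 20, 30 and 40 ms, so with 200 ms animations the list is re-rendered 240 ms after the shuffle begins.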

The result looks pretty cool ...

Part of the power of manipulations is their 'organic' feel, which is why inertia and the feeling of friction are important considerations. With the current to-do application, when the user releases an item after dragging it, the item springs back to its original position, as if it were tethered by a piece of elastic. The user needs to 'pull' the item past the half-way mark in order to invoke a delete / complete operation. However, in order to make the interaction feel more 'organic', the user should be able to give the todo item a short, fast flick, giving the item enough momentum to pass the critical point. In order to support this we can use the GestureListener.Flick event:

We could do a bit of physics, creating a spring constant, giving our items a nominal mass and determining whether they have been flicked with a 'critical velocity'. However, for such a simple application, I'm happy to just come up with a velocity constant that, if exceeded, results in the delete or complete action:

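private static double FLICK_VELOCITY = 2000.0;

// true once a flick has triggered a delete or complete action; reset when a new drag starts

private bool _flickOccured;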

private void GestureListener_Flick(object sender, FlickGestureEventArgs e)

{

FrameworkElement fe = sender as FrameworkElement;

if (e.HorizontalVelocity < -FLICK_VELOCITY)

{

_flickOccured = true;

ToDoItemDeletedAction(fe);

}

else if (e.HorizontalVelocity > FLICK_VELOCITY)

{

_flickOccured = true;

ToDoItemCompletedAction(fe);

}

}

Contextual cues

My friend Graham Odds wrote a great post on the use of contextual cues within user-interface design, which are subtle effects that in Graham's words "can be invaluable in effectively communicating the functionality and behaviour of our increasingly complex user interfaces".

The todo-list application uses gestures to delete / complete an item; however, these are not commonly used interactions, so it is likely that the user would have to experiment with the application in order to discover this functionality. They would most likely have to first delete a todo-item by mistake before understanding how to perform a deletion, which could be quite frustrating!

In order to help the user understand the slightly novel interface, we'll add some very simple contextual cues. In the XAML below, a Canvas has been added to the item template; this enables us to position a cross and a tick outside of the visible screen, one to the left and one to the right:

<Grid>

<!-- add a subtle gradient over the background color for this item -->

...

<!-- task text -->

<TextBlock Text="{Binding Text}" Margin="15,15,0,15" FontSize="30"/>

<!-- the strike-through that is shown when a task is complete -->

<Line Visibility="{Binding Path=Completed, Converter={StaticResource BoolToVisibilityConverter}}"

X1="0" Y1="0" X2="1" Y2="0"

Stretch="UniformToFill"

Stroke="White" StrokeThickness="2"

Margin="8,5,8,0"/>

<!-- a tick and a cross, rendered off screen -->

<Canvas Opacity="0" x:Name="tickAndCross" >

<TextBlock Text="×" FontWeight="Bold" FontSize="35"

Canvas.Left="470" Canvas.Top="8"/>

<TextBlock Text="✔" FontWeight="Bold" FontSize="35"

Canvas.Left="-50" Canvas.Top="8"/>

</Canvas>

</Grid>

In the code-behind, we can locate this Canvas element using Linq-to-VisualTree, then set the opacity so that it fades into view, with the tick and cross elements becoming more pronounced the further the user swipes:

private void GestureListener_DragStarted(object sender, DragStartedGestureEventArgs e)

{

// initialize the drag

FrameworkElement fe = sender as FrameworkElement;

fe.SetHorizontalOffset(0);

_flickOccured = false;

// find the container for the tick and cross graphics

_tickAndCrossContainer = fe.Descendants()

.OfType<Canvas>()

.Single(i => i.Name == "tickAndCross");

}

private void GestureListener_DragDelta(object sender, DragDeltaGestureEventArgs e)

{

// handle the drag to offset the element

FrameworkElement fe = sender as FrameworkElement;

double offset = fe.GetHorizontalOffset().Value + e.HorizontalChange;

fe.SetHorizontalOffset(offset);

_tickAndCrossContainer.Opacity = TickAndCrossOpacity(offset);

}

private double TickAndCrossOpacity(double offset)

{

offset = Math.Abs(offset);

if (offset < 50)

return 0;

offset -= 50;

double opacity = offset / 100;

opacity = Math.Max(Math.Min(opacity, 1), 0);

return opacity;

}
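To get a feel for the numbers, the opacity calculation can be lifted out and run on its own. The function body below is the same as the one above; only the wrapping `ContextualCue` class is a hypothetical name added so that it can be exercised outside the page's code-behind:

```csharp
using System;

// Hypothetical wrapper class; the method body matches TickAndCrossOpacity above.
public static class ContextualCue
{
    public static double TickAndCrossOpacity(double offset)
    {
        // the direction of the drag does not matter, only the distance
        offset = Math.Abs(offset);

        // nothing is shown for the first 50 pixels of movement
        if (offset < 50)
            return 0;

        // fade in linearly over the next 100 pixels, clamped to the range 0..1
        offset -= 50;
        double opacity = offset / 100;
        return Math.Max(Math.Min(opacity, 1), 0);
    }
}
```

So the cues stay invisible for the first 50 pixels of a drag, reach half opacity at 100 pixels, and are fully opaque from 150 pixels onwards, in either direction.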

With that subtle visual effect, the first iteration of my gesture-driven to-do application is complete. I'll add new features, such as drag-to-reorder, and the ability to add / edit items in the near future, again, all driven by gestures.

You can download the code here: ClearStyle.zip

Update: Read more about this application in part two, where I add drag re-ordering.

Regards, Colin E.