AKA ‘How to use every experimental web technology you can think of to re-implement a bedtime story’

This post is the first in a series about my latest project, Narration.studio (GitHub). Here, I introduce the project, discuss the motivations behind it, and give a top-level view of how it all works. In the following posts, we’ll deep-dive into the more technical aspects.

Click the links below to jump to the other parts.

- Introduction

- Markdown Parsing (Coming Soon)

- Speech Recognition (Coming Soon)

- Audio Processing (Coming Soon)

- High-Performance Rendering (Coming Soon)

For best results, listen to me read you this post instead! Just play the audio and the page will automatically scroll in sync. After all, don’t you want to see the fruits of my labour?

Background

When I wrote my last blog post about ergonomic keyboards, I decided at the last minute that it would be nice if there was a narration you could listen to, since it was very story-like. Without putting much thought into it, I loaded up Audacity and started recording myself reading the script. It’s not the first time I’ve done something like that - I’ve been involved in podcasts in the past so it was basically muscle memory.

When I was done with the recording, I had to start editing it.

I cut out the bad takes, deleted the bit where my cat walked in screaming, and tidied up the pauses between sentences.

Then, I listened to the whole thing at 2x speed and made a note of when each paragraph started.

I manually translated those timestamps into <div>s, annotating the script so it knew when to highlight each paragraph.

What I’m trying to say here is that it was incredibly manual. It only took me an hour or two in total, but those were some very boring minutes of my life. There was only one thing on my mind:

Surely there’s a way to automate it and save time?

That thought bounced around inside my skull until one day, I woke up in a cold sweat with little memory of those days prior. Two weeks had passed. Narration.studio was released.

What does it do?

We know that it automates your narration, but what does that mean in practice? Well, I could do a write-up, but there’s already one on the home page so I’m just going to steal that. Here’s how to use Narration.studio:

Step 1: Enter the script

- Plain Text / CommonMark / GitHub Flavored Markdown

- Supports links, images, tables, code blocks, and more!

Step 2: Record the script

- Read each line as it’s shown to you

- Don’t like your delivery? Just say the last line again!

- Got distracted and said something not in the script? It’ll get cut!

- 5 second pause? 10 minute pause? It’ll get cut!

- No manual input required, everything works with speech recognition

Step 3: Edit the recording

- Listen to the auto-edited recording

- Adjust the start and end of each clip

- Auto-saves, so leave and come back later!

- Grab the script annotated with audio timestamps

- Download your edited audio as a high quality .wav file

How does it work?

Those are the important bits from a user’s perspective, but which bits were the hardest to implement? The project may look simple at a glance, but once you peel back the curtain there’s a whole host of interesting problems that needed to be solved. It has the amusing effect of making the project really impressive to other developers but kinda underwhelming to everyone else!

On top of the hard Computer Science problems to be solved, I had the added complexity of building it as a frontend-only web app. That was better from a privacy perspective, but more importantly meant it was completely free to host. It’s probably no surprise to anyone who’s seen my recent tech talks and other blogs, but I picked Svelte as the project’s frontend framework. On top of that, I used every experimental web technology imaginable, including:

- Speech Recognition API (part of the Web Speech API)

- Web Audio API

- MediaDevices API

- OffscreenCanvas

- WebGL2

- IndexedDB

Let’s talk a bit more about where that technical complexity came into play. To me, there were four main areas. Each one is getting its own blog post, but here’s a summary:

Part 2: Markdown Parsing

The whole point of Narration.studio was to automate the process of recording voiceovers for my blog posts. Since I write them in Markdown, I really wanted to be able to use that in the script to remove the need for any manual pre-processing.

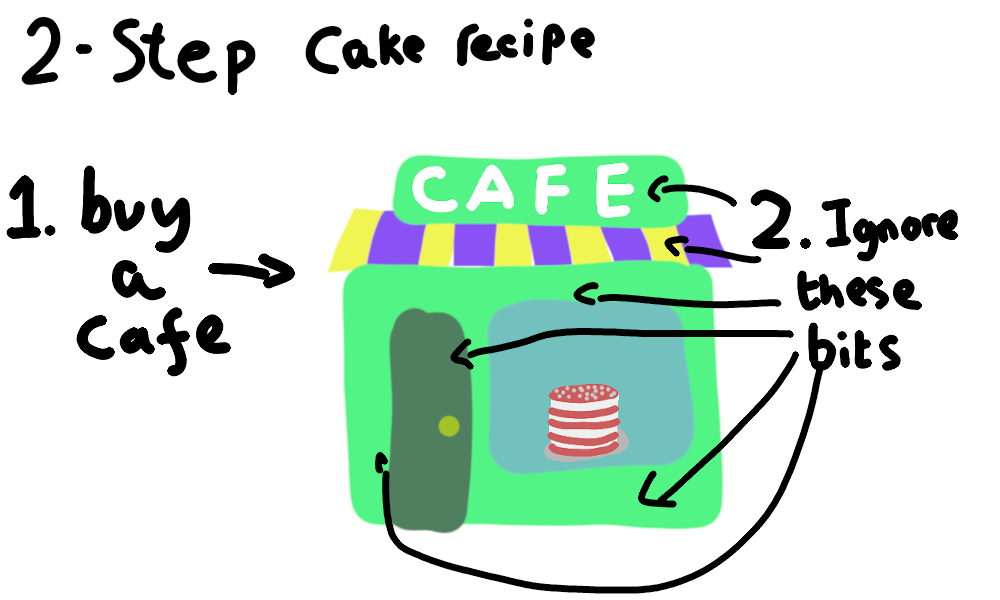

Given a Markdown script, we need to know where the start and end of each sentence is. We also need to determine which bits should be read aloud, and which bits are just for formatting or metadata. How can we make sure the user only has to read the text, and not links and other non-text bits?

You can probably see where this is going. Yes, I started with regular expressions. And yes, I did eventually give up and use a Markdown lexer. That’s not to say it solved the problem though - there was still a lot of post-processing needed.
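To give a flavour of the problem, here is a minimal sketch of pulling the "read aloud" text out of a token tree. The `Token` type is hypothetical (loosely shaped like what a Markdown lexer emits, not any particular library's output), and skipping images and code is an assumption for illustration rather than how Narration.studio necessarily behaves.

```typescript
// Hypothetical token shape, NOT a real library's API.
type Token =
  | { type: "text"; text: string }
  | { type: "link"; href: string; children: Token[] }
  | { type: "emphasis"; children: Token[] }
  | { type: "image"; href: string; alt: string }
  | { type: "code"; text: string };

// Collect only the words a narrator would read aloud.
function spokenText(tokens: Token[]): string {
  return tokens
    .map((token): string => {
      switch (token.type) {
        case "text":
          return token.text;
        case "link":
        case "emphasis":
          // Read the label, skip the URL and the formatting markers.
          return spokenText(token.children);
        case "image":
        case "code":
          // Assumed not to be narrated in this sketch.
          return "";
      }
    })
    .join("");
}

// Naive sentence split: a run of text followed by optional ., ! or ?.
function splitSentences(text: string): string[] {
  const parts = text.match(/[^.!?]+[.!?]*/g) ?? [];
  return parts.map((s) => s.trim()).filter((s) => s.length > 0);
}

const exampleTokens: Token[] = [
  { type: "text", text: "Read " },
  {
    type: "link",
    href: "https://example.com",
    children: [{ type: "emphasis", children: [{ type: "text", text: "this" }] }],
  },
  { type: "text", text: " aloud. Skip the rest! " },
  { type: "image", href: "image-1.png", alt: "image 1" },
];

const exampleSentences = splitSentences(spokenText(exampleTokens));
```

Even this toy version hints at the post-processing involved: abbreviations, decimal numbers, and nested formatting would all trip up the naive sentence split.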

In part 2 (coming soon), we see once again why you shouldn’t try to write your own parser in regex.

Part 3: Speech Recognition

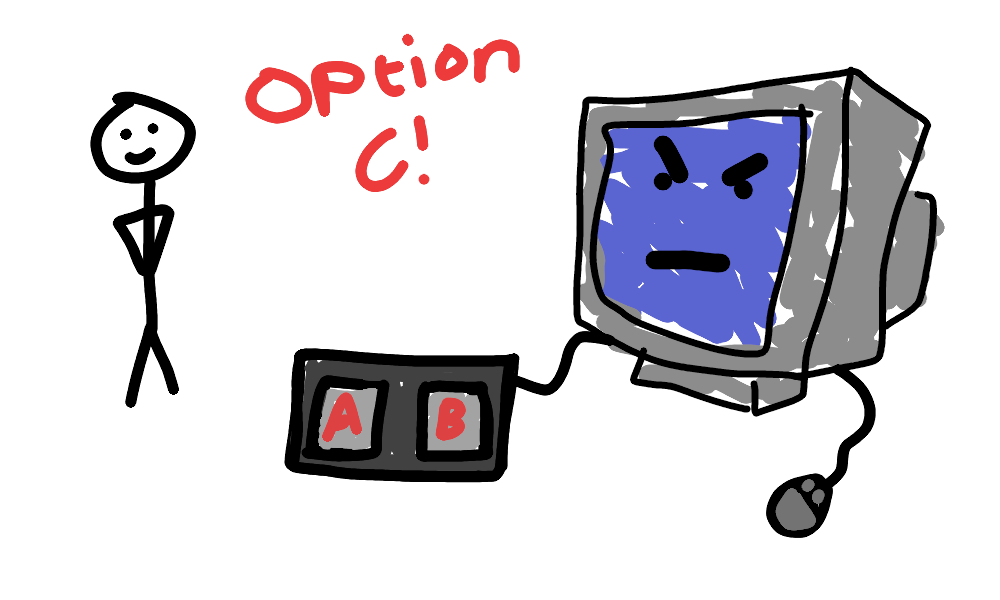

I didn’t want any manual input during the recording process. The site knows the script and knows what the user could be saying, and is already recording the mic. Can’t we use that information to automatically determine which line was said, and when?

The answer is yes - there is an experimental Speech Recognition API in recent Chromium-based browsers, and it works seamlessly. However, the API just returns the sentence it thinks you said. That brings a whole host of new problems.

What happens when the recognised speech doesn’t perfectly match any of the lines in the script? What happens when two words sound too similar to distinguish? How can you determine how similar two sentences sound?

Dealing with those problems, I finally got a chance to use that advanced algorithms module I suffered through at uni. Join me on that journey in part 3 (coming soon), where we explore the strange intersection between speech recognition websites and 1960s mathematical theory.
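As a taste of the matching problem, here is a rough sketch that picks the script line closest to what was heard, using a word-level edit distance. This is an assumption on my part for illustration: the summary above doesn't say which algorithm the project uses, and the function names (`normalise`, `editDistance`, `closestLine`) are made up for this example. It also only compares spelling, not how words sound.

```typescript
// Lowercase, strip punctuation, and split into words.
function normalise(sentence: string): string[] {
  return sentence
    .toLowerCase()
    .replace(/[^a-z0-9'\s]/g, "")
    .split(/\s+/)
    .filter((word) => word.length > 0);
}

// Classic dynamic-programming edit distance, counted in whole words.
function editDistance(a: string[], b: string[]): number {
  let prev: number[] = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      curr.push(
        Math.min(
          prev[j] + 1, // delete a word
          curr[j - 1] + 1, // insert a word
          prev[j - 1] + cost // substitute (or keep) a word
        )
      );
    }
    prev = curr;
  }
  return prev[b.length];
}

// Return the index of the script line with the smallest distance.
function closestLine(
  heard: string,
  script: string[]
): { index: number; distance: number } {
  const heardWords = normalise(heard);
  let best = { index: -1, distance: Infinity };
  for (let index = 0; index < script.length; index++) {
    const distance = editDistance(heardWords, normalise(script[index]));
    if (distance < best.distance) {
      best = { index, distance };
    }
  }
  return best;
}

const exampleScript = [
  "I loaded up Audacity and started recording.",
  "I cut out the bad takes.",
  "Then I listened to the whole thing.",
];
const exampleMatch = closestLine("i cut out the bad cakes", exampleScript);
```

In practice you would also want a cut-off: if even the best distance is large relative to the line length, the speaker probably said something that isn't in the script at all.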

Part 4: Audio Processing

Shockingly, web browsers weren’t designed for audio production. How can we ignore that inconvenient fact, and record lossless audio in a browser? Once we’ve done that, how can we edit it in the browser, keeping that lossless quality? How can we post-process it to improve the quality in a generic way that works for everyone?

The Speech Recognition API gives us an approximate start and end time for each sentence, but how can we get it exact? We have the audio recorded, so we can base the timings on that, but how can you tell the difference between speech and silence? How do you deal with background noise?
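One simple way to tell speech from silence is to measure the loudness (RMS) of short windows of samples and treat anything above a threshold as speech. The sketch below assumes mono samples in a `Float32Array`, which is the format the Web Audio API hands out. The window size and threshold are made-up parameters, and this is not necessarily the approach Narration.studio takes.

```typescript
// Root-mean-square loudness of samples[start, end).
function rms(samples: Float32Array, start: number, end: number): number {
  let sum = 0;
  for (let i = start; i < end; i++) {
    sum += samples[i] * samples[i];
  }
  return Math.sqrt(sum / Math.max(1, end - start));
}

// Find the sample range [start, end) spanning every window louder than
// the threshold, or null if the whole buffer counts as silence.
function findSpeech(
  samples: Float32Array,
  windowSize: number,
  threshold: number
): { start: number; end: number } | null {
  let first = -1;
  let last = -1;
  for (let start = 0; start < samples.length; start += windowSize) {
    const end = Math.min(start + windowSize, samples.length);
    if (rms(samples, start, end) > threshold) {
      if (first < 0) first = start;
      last = end;
    }
  }
  return first < 0 ? null : { start: first, end: last };
}

// 1000 samples of silence with a "loud" section in the middle.
const exampleSamples = new Float32Array(1000);
for (let i = 300; i < 700; i++) {
  exampleSamples[i] = i % 2 === 0 ? 0.5 : -0.5;
}
const exampleRegion = findSpeech(exampleSamples, 100, 0.1);
```

Background noise is what makes this hard: a fixed threshold has to sit above the noise floor of the room yet below the quietest syllable, and the right value differs for every microphone.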

In part 4 (coming soon), we go on an in-depth journey through the Web Audio API, putting everything I learnt writing MuseTree to use.

Part 5: High-Performance Rendering

On the editor screen, the waveform is a visual indication of the audio, making it easier to edit the start and end of each snippet accurately. However, that’s not as simple as it sounds.

Uncompressed audio is around 1MB of data every 10 seconds. The sheer quantity of data causes a lot of issues - or at least I thought it would. In a classic tale of premature optimisation, I went through a few different approaches trying to minimise the amount of rendering that needs to happen.
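For a sense of where that figure comes from, here is the back-of-the-envelope arithmetic. The sample rates and bit depths below are common values picked for illustration, not necessarily the settings Narration.studio records with.

```typescript
// Size in bytes of raw (uncompressed) PCM audio.
function pcmBytes(
  seconds: number,
  sampleRate: number,
  bytesPerSample: number,
  channels: number
): number {
  return seconds * sampleRate * bytesPerSample * channels;
}

// 10 seconds of mono, 16-bit audio at 44.1 kHz: 882,000 bytes, just under 1MB.
const cdQualityMono = pcmBytes(10, 44100, 2, 1);

// 10 seconds of mono, 32-bit float audio at 48 kHz: 1,920,000 bytes.
const floatMono = pcmBytes(10, 48000, 4, 1);
```

Either way, a recording that runs for a few minutes quickly adds up to tens of megabytes of samples to draw.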

In the end, guess what? Turns out it’s way faster to just throw all the data at the GPU using WebGL and brute-force your way to victory! It’s not perfect, but it’s good enough.

Part 5 (coming soon) is dedicated to this topic, walking through a few of my failed attempts to optimise the rendering code before discussing what I ended up with. We’ll explore the strengths and weaknesses of my approach, before introducing a few optimisations that might actually be necessary.

Conclusion

It took me a while, and it was a lot harder than I expected, but I’m happy with the finished product. I mean, clearly - I used it for this post! It works consistently, does what it says on the tin, and gave me an excuse to try out a load of new web technologies! Want to know more about the technical side of things? Go check out part 2 (coming soon), which discusses how I parse the script and figure out which bits need narrating.

If you’ve heard enough, try it out for yourself at Narration.studio! Found a bug? It’s open source on GitHub, so open an issue. Even better, do my work for me and fix it with a PR!

Questions? Feedback? Hit me up on Twitter!